On Your Mark

Restoring the competitive advantage of analytics by integrating data foundation and data science

June/July 2018

At leading insurers, data does not just support the business; it drives it. Leaders at these organizations recognize data is a strategic asset that can drive competitive advantage and aid in the quest for customers and top-flight talent. At the same time, data consumers, from internal users to external customers, are more comfortable than ever working with data, and they push the enterprise to deliver impactful data quickly and in an immediately actionable format.

Faced with evolved consumer expectations and an explosion of information, many insurers struggle to operate under this new paradigm. Hampered by aging infrastructure, outdated approaches and a reactive culture, they find it difficult to progress at the pace today’s disruptive environment demands. Years of underinvestment in modern technology, methodologies and processes have weakened their data foundation to the point that the savviest consumers, such as actuaries and data scientists, conduct mission-critical analysis in independent, nonintegrated silos.

To become more data-led, insurers should coordinate and integrate investments in both data foundation and data science programs to deliver more frequent data insights and fundamentally influence the strategy of the business.

The Challenges of Executing Traditional Reporting and Analytics Programs

Traditional reporting and analytics initiatives typically follow one of two paths: data foundation or data science. Data foundation programs, usually driven by information technology (IT), include investments in building or improving data structures, and delivering regulatory, compliance, operational or management reports. Data science programs, led by actuaries and data scientists, focus on using predictive and probabilistic approaches centered on a specific use case. Both paths advance the journey to becoming a data-driven insurer, but each suffers from distinct challenges that hamper the ability to maximize program investments.

Together, the challenges facing data foundation and data science programs have compounded to severely depress the business benefits of traditional approaches to improving data.

Data foundation programs struggle to deliver value quickly because fragmented data architecture makes them difficult to scale and accelerate. The challenge appears at the start of an initiative, when data sourcing and standardization is the hardest exercise. Existing solutions have evolved over decades and are poorly understood, with the knowledge residing with a select few. This limits the opportunities for IT and business users to form integrated, diversified teams that can deliver results quickly. Instead, the underlying data complexities force projects to follow a narrow scope and traditional delivery methodologies, in which business users create data sourcing requirements and pass them to IT to develop and implement.

The complexity of data foundations causes problems post-delivery because few users in the organization fully understand the data, which leads to conclusions drawn using incomplete or incorrect information. This reduces the trust users have in the data, which leads to the creation of siloed data stores not integrated into the foundation, exacerbating the complexity.

Another challenge with complex data foundations is that issues are frequently uncovered late in the delivery life cycle, which increases project durations and leads to long, multiyear roadmaps. Some of these programs aim to deliver perfect data and, as a result, try to “boil the ocean,” grouping many different challenges under one umbrella. These programs are difficult to administer and maintain, which can lead to diminished momentum and dwindling executive support.

Data science programs also struggle to execute effectively, and the central challenge facing them is operational sustainability. Difficulties procuring quality data from the foundation result in significant efforts to acquire and cleanse data. This approach erodes project value as highly skilled employees spend hours on repetitive, redundant activities rather than analysis. Once this cleanup is complete and the data is ready for analysis, it is frequently stored in a silo outside of the enterprise foundation, making it difficult or impossible for other users to access or to integrate into existing business processes. Lastly, insights generated by these programs are rarely fed back to the data foundation or integrated into business processes, which limits their ability to drive incremental and sustainable business value.

According to a survey of more than 2,000 managers conducted by MIT Sloan Management Review and SAS Institute: “The percentage of companies that report obtaining a competitive advantage with analytics has declined significantly over the past two years. Increased market adoption of analytics levels the playing field and makes it more difficult for companies to keep their edge.”1

Restoring Competitive Advantage Through Better Analytics Investments

To return to a more profitable model for investment, insurers need to combine data foundation and data science into a holistic set of capabilities. An integrated approach allows each pillar to concentrate on driving specific value while contributing to a comprehensive approach that delivers repeatable insights.

For the data foundation, the focus should be on developing a platform that enables self-service and exploration while delivering information that provides insights into business profitability. For example, many insurance companies have a combination of active and legacy source systems with convoluted data pathways and overlapping data repositories. In the past, the most common remedy was to invest in heavy data modeling projects to build complex data warehouses that delivered a set of predetermined reports. Driving this approach was an older generation of tools that could not handle the data volumes or supply the processing power required for more advanced predictive analysis. But newer technologies, like big data platforms and cloud-based infrastructure, have drastically reduced the cost and time for data retrieval. Data foundation projects can now focus on making flatter, less normalized structures, like data lakes and operational data stores, that allow business users, rather than IT, to define how to consume the data. Alongside these architectural improvements, projects can enable advanced data management approaches, like automated data mastering and cleansing routines powered by artificial intelligence (AI), which can help drive quicker, more accurate insights and allow for a culture of self-service. This innovation in technology enables data foundation and data science capabilities to be brought together on a single platform.
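As a rough illustration of the mastering and cleansing routines mentioned above, the following Python sketch standardizes and merges policyholder records from two source systems. It uses simple deterministic matching rules rather than AI, and every field name, system label and sample record is a hypothetical assumption made for the example.

```python
from collections import defaultdict


def normalize(record):
    """Standardize the fields used to match records across systems."""
    return {
        "name": " ".join(record["name"].lower().split()),
        "dob": record["dob"].strip(),
        "postcode": record["postcode"].replace(" ", "").upper(),
        "source": record["source"],
    }


def master_records(records):
    """Merge records sharing the same normalized name, birth date and postcode."""
    groups = defaultdict(list)
    for record in records:
        clean = normalize(record)
        key = (clean["name"], clean["dob"], clean["postcode"])
        groups[key].append(clean["source"])
    # One mastered record per key, listing every source system that holds it.
    return [
        {"name": name, "dob": dob, "postcode": postcode, "sources": sorted(sources)}
        for (name, dob, postcode), sources in groups.items()
    ]


# Hypothetical sample data from an active and a legacy system.
sample = [
    {"name": "Ann  Lee", "dob": "1980-02-01", "postcode": "ab1 2cd", "source": "legacy"},
    {"name": "ann lee", "dob": "1980-02-01 ", "postcode": "AB12CD", "source": "active"},
    {"name": "Bo Chen", "dob": "1975-07-30", "postcode": "XY9 8ZT", "source": "active"},
]

golden = master_records(sample)
for row in golden:
    print(row)
```

In practice, exact-match keys like these would likely be supplemented by fuzzy or learned matching, but the shape of the routine (standardize, match, merge) stays the same.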

Increasing the value of data science investments requires increasing the breadth, depth and diversity of the internal and external sources available to actuaries and data scientists, and providing modern data analysis tools that allow them to generate insights quickly. This helps support statistically driven approaches, like predictive modeling and scenario analysis, which can use uncleansed data to deliver insights. But to fully accelerate value capture from data science investments, the insights obtained need to be integrated back into the data foundation through institutionalized and repeatable processes, for which a high-quality data foundation is critical. This integration helps these insights take advantage of next-generation technologies like robotic process automation (RPA) and cognitive engagement that improve results over time.
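One way to picture a repeatable write-back process is sketched below in Python, using an in-memory SQLite database as a stand-in for the shared data foundation. The table names, columns and the toy scoring rule are all assumptions made for illustration; a real program would substitute its own model and platform.

```python
import sqlite3


def score_lapse_risk(premium, years_held):
    """Toy scoring rule standing in for a real predictive model."""
    risk = 0.5 + 0.0001 * premium - 0.05 * years_held
    return round(min(max(risk, 0.0), 1.0), 2)


def write_back_scores(conn, model_version):
    """Score every policy and store the results in the shared foundation."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS policy_scores ("
        "policy_id TEXT, model_version TEXT, lapse_risk REAL, "
        "PRIMARY KEY (policy_id, model_version))"
    )
    rows = conn.execute(
        "SELECT policy_id, premium, years_held FROM policies"
    ).fetchall()
    scored = [(pid, model_version, score_lapse_risk(p, y)) for pid, p, y in rows]
    # INSERT OR REPLACE keeps the step repeatable: reruns update, not duplicate.
    conn.executemany("INSERT OR REPLACE INTO policy_scores VALUES (?, ?, ?)", scored)
    conn.commit()
    return len(scored)


# Hypothetical foundation table with two policies.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE policies (policy_id TEXT, premium REAL, years_held INTEGER)")
conn.executemany(
    "INSERT INTO policies VALUES (?, ?, ?)",
    [("P1", 1000.0, 2), ("P2", 3000.0, 10)],
)

written = write_back_scores(conn, "v1")

# Any other user of the foundation can now query the scores directly.
high_risk = conn.execute(
    "SELECT policy_id FROM policy_scores WHERE lapse_risk >= 0.4 ORDER BY policy_id"
).fetchall()
print(written, high_risk)
```

Storing the model version alongside each score is a design choice that lets downstream users tell which analysis produced a given insight.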

Operationalizing Data Foundation and Data Science Initiatives

Integrated data foundation and data science initiatives should be executed within a common operating model for analytics. Shifting to more agile, integrated approaches will be radically different for some insurers, so care is needed to reduce the disruption to current processes and help put the right people in the right roles. It is critical that leadership from both business and IT champion the effort and implement the project from a joint perspective.

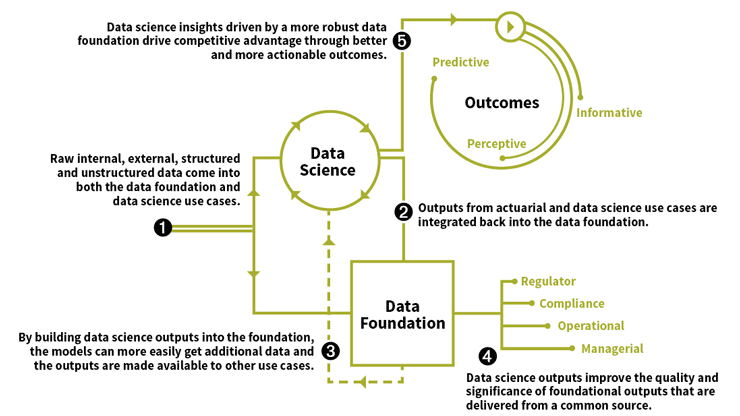

Instantiating an integrated operating model will help fund future improvements to data foundation and data science initiatives. With traditional approaches, it is difficult to secure funding for needed data foundation work because the costs are often large and the investment is hard to tie back to discrete business benefits. Data science programs operating in silos also struggle for support because it is hard to demonstrate how anticipated insights will be shared across the enterprise. By integrating the outcomes from data science use cases back into the data foundation, insurers create a causal relationship between the two, and a self-reinforcing loop of investments and benefits can power future initiatives. See Figure 1.

Figure 1: Integrating Data Science and Foundation for Competitive Advantage

Copyright © 2017 Deloitte Development LLC. All rights reserved.

Getting There: Building the Wins for the Program

While it can be difficult to enact these changes, the imperatives for insurers are clear. To help build a future-focused analytics function, three key themes are critical:

- Define program integrations from the start. Before executing any projects, clearly define the scope and understand how it will enable both the data foundation and data science together. Encourage program leads to continually work toward simplifying foundational complexity, and eliminate analysis executed in nonintegrated platforms. Consider using interactive, participant-driven workshops and labs to secure buy-in and help build messages that sustain momentum.

- Improve the pace of change. Employ agile methodologies that deliver incremental value in short, defined periods that demonstrate progress and build excitement. This will better maintain momentum and create more frequent checkpoints to reflect on the business value delivered.

- Track investment of required resources. Secure and maintain leadership support for integrated data programs. Use a continually updated stakeholder matrix to capture the program champions and identify who needs to be engaged more directly. Lean on the program champions to identify and make available their top resources to support programs with dedicated hours and budget.

As other industries have demonstrated, there is an early mover advantage awaiting insurers that find ways to increase their data savviness. According to Gartner’s “Hype Cycle for Digital Insurance,”2 fewer than 5 percent of insurers are well positioned to leverage full benefits from data science. Working now to integrate a robust data foundation and impactful data science programs into a common operating model for analytics can provide a powerful competitive advantage. User demand for data shows no signs of slowing, so starting the journey now can help put insurers on a path to future success.